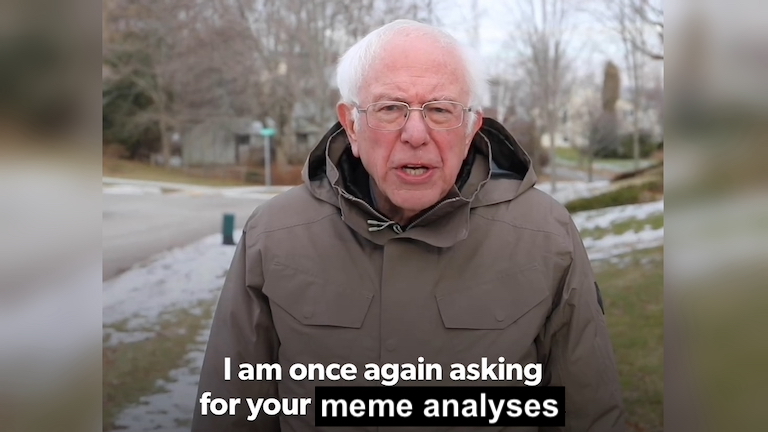

Call for submissions. Deadline: 31 October 2021.

We invite you to submit entries to the Great Canadian Encyclopedia of Political Memes. Each entry should focus on a single meme and its relevance to the 44th Canadian election. Your entry is a chance to explain whether and how memes mattered in #elxn44. You might select an example because you think it:

- exemplifies the type of content shared in your sample,

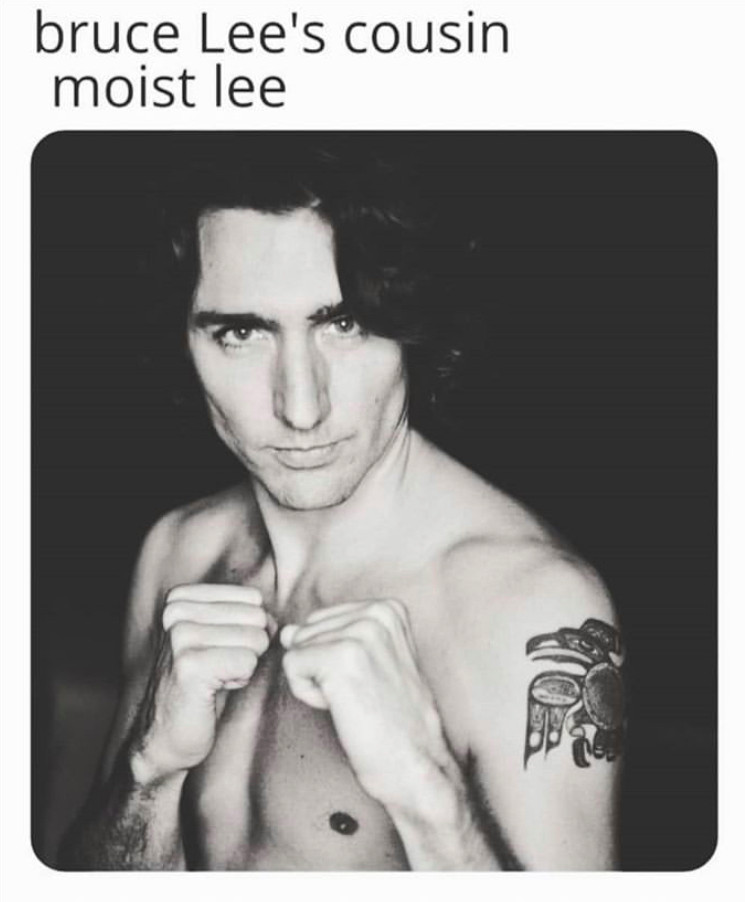

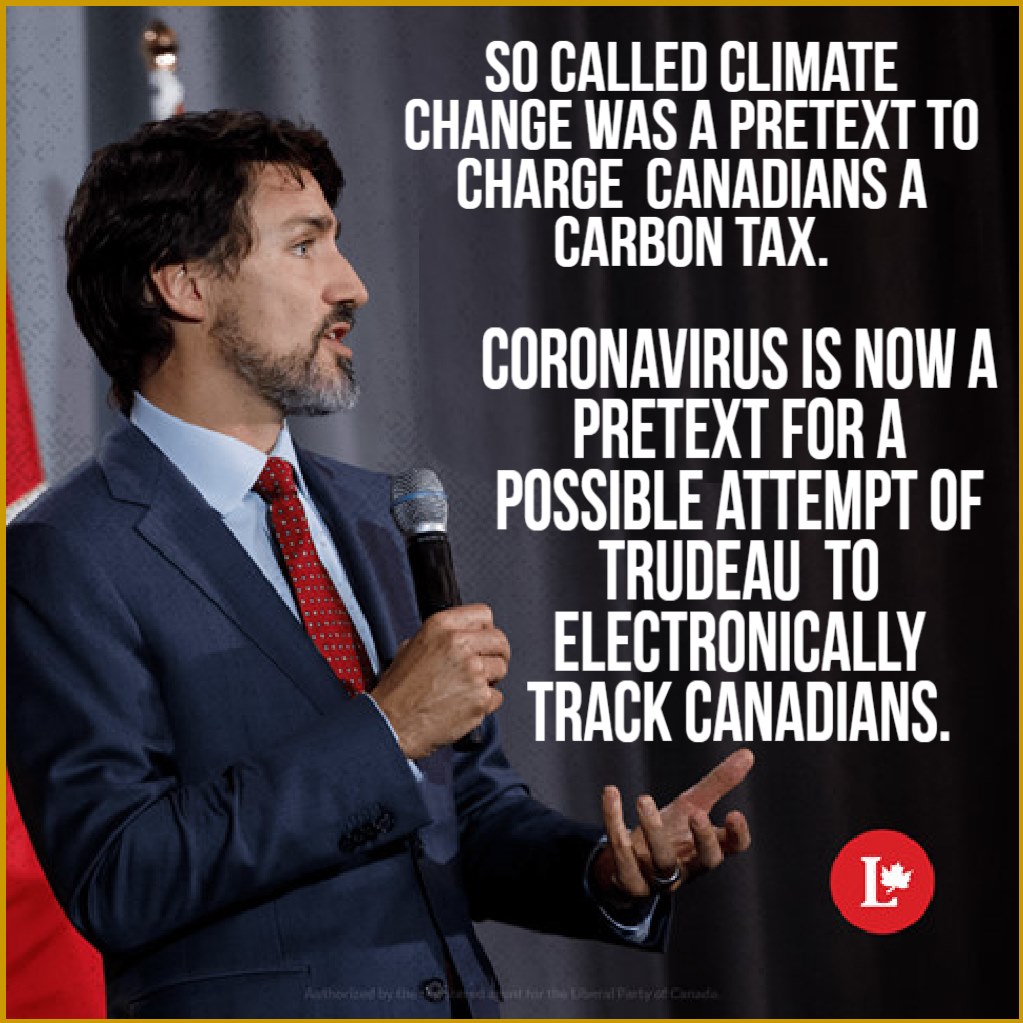

- influenced a party’s brand during the election,

- framed an election issue,

- was popular or shared a lot,

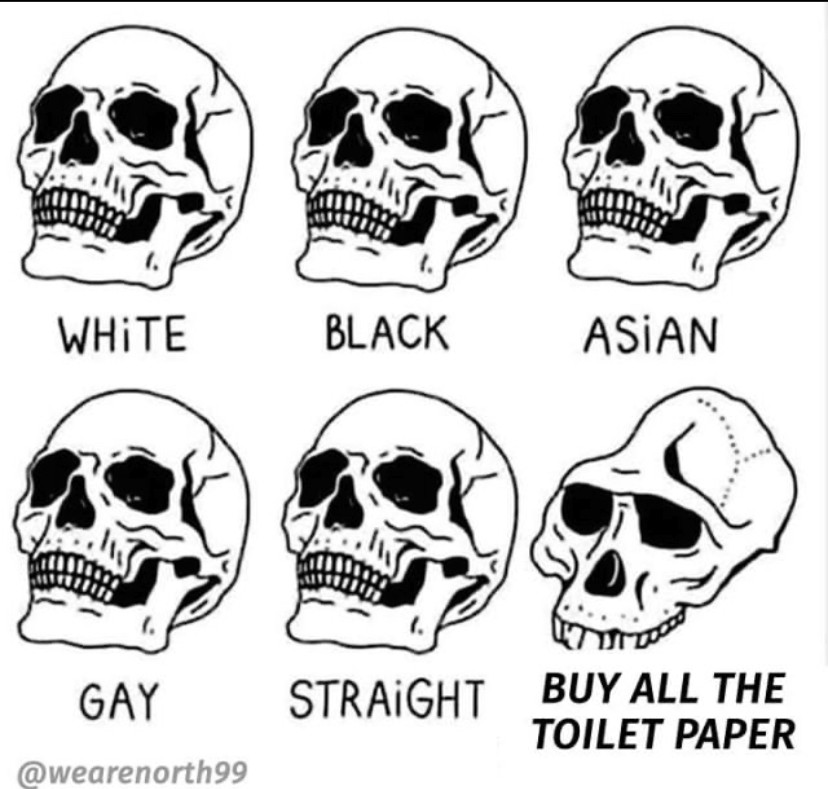

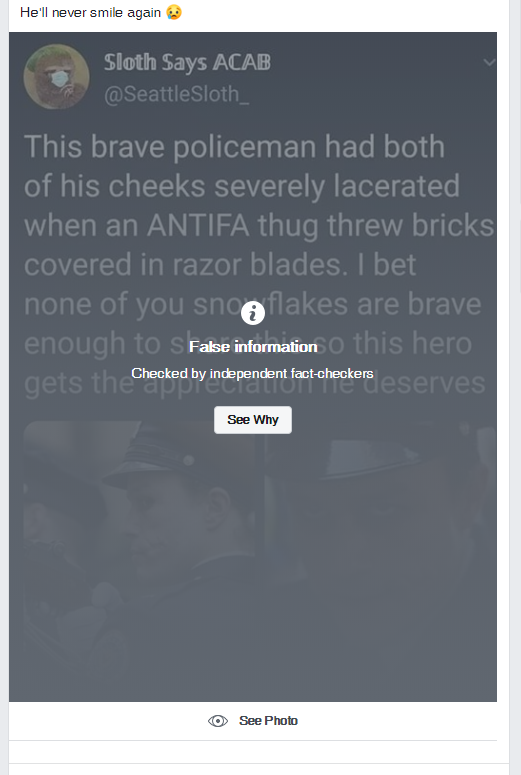

- transgressed community values or norms,

- connected different communities in important ways (from fringe to mainstream), or

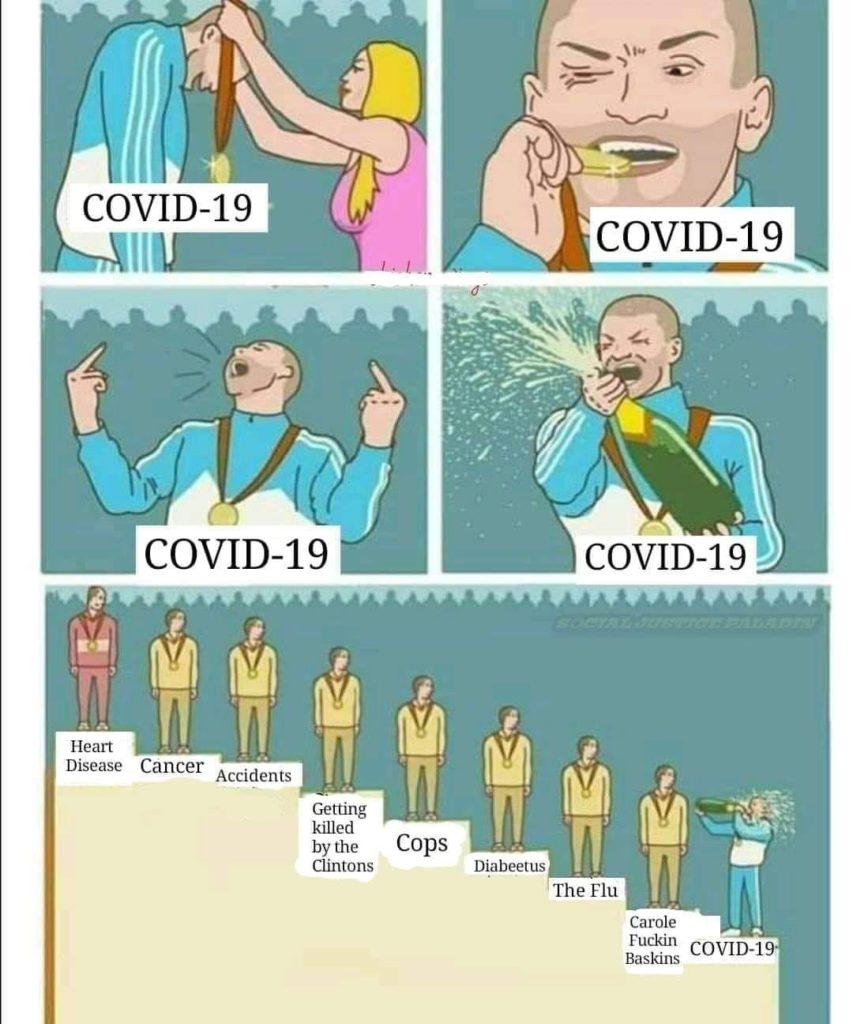

- iterated on the meme or memetic style in a distinct way.

Submissions should include:

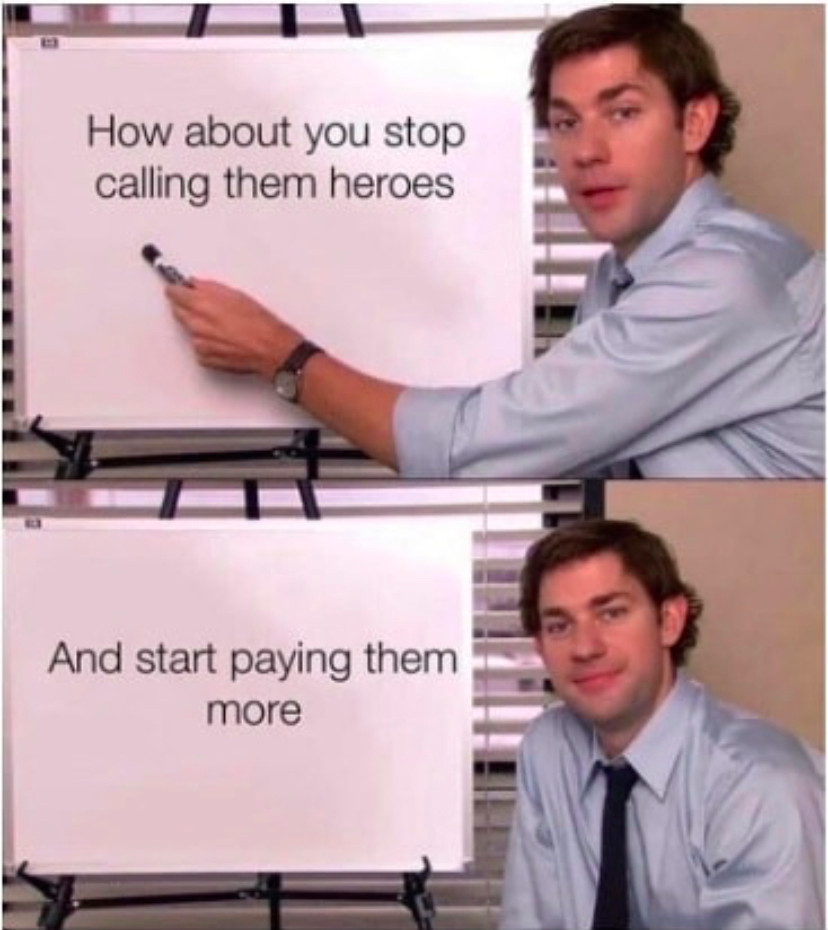

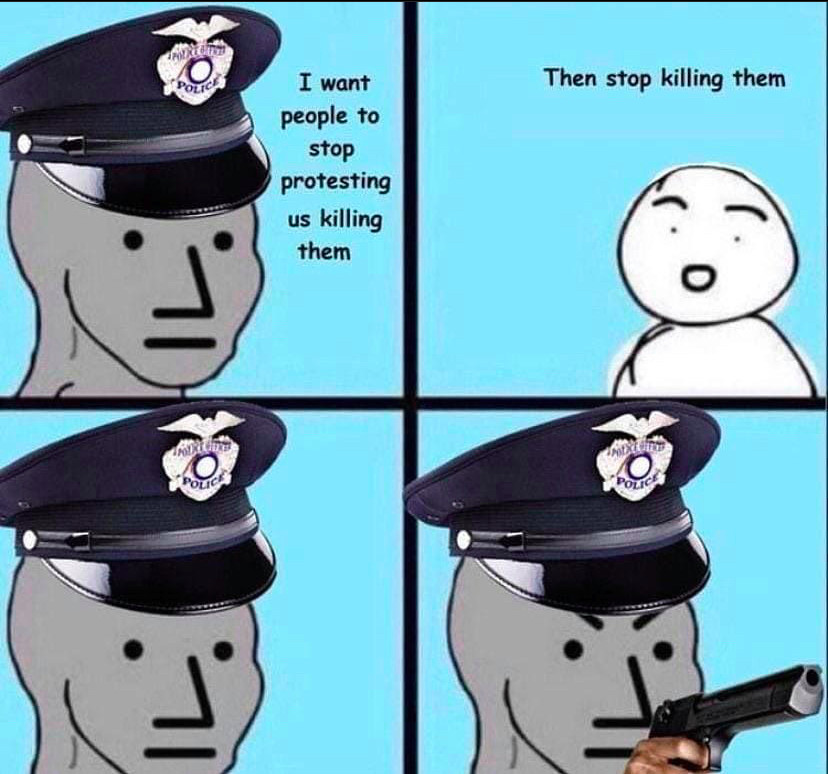

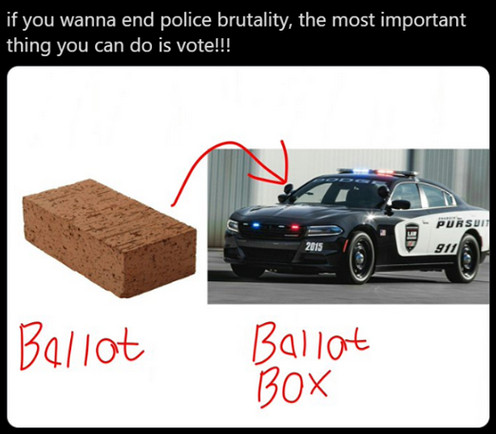

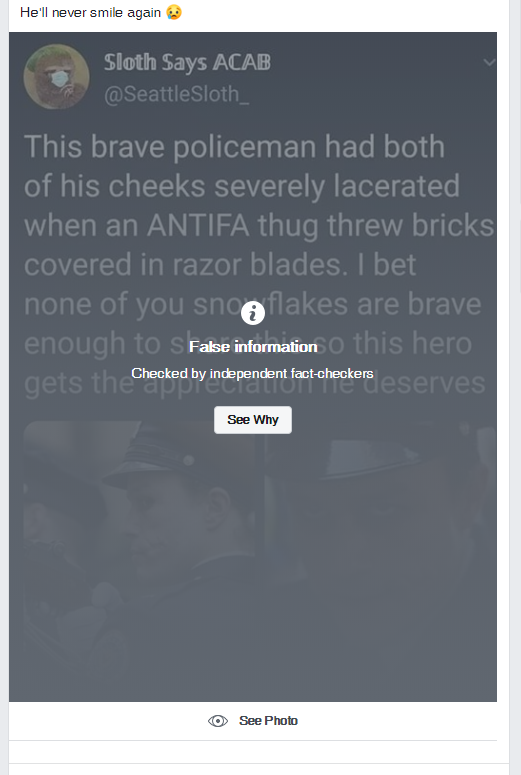

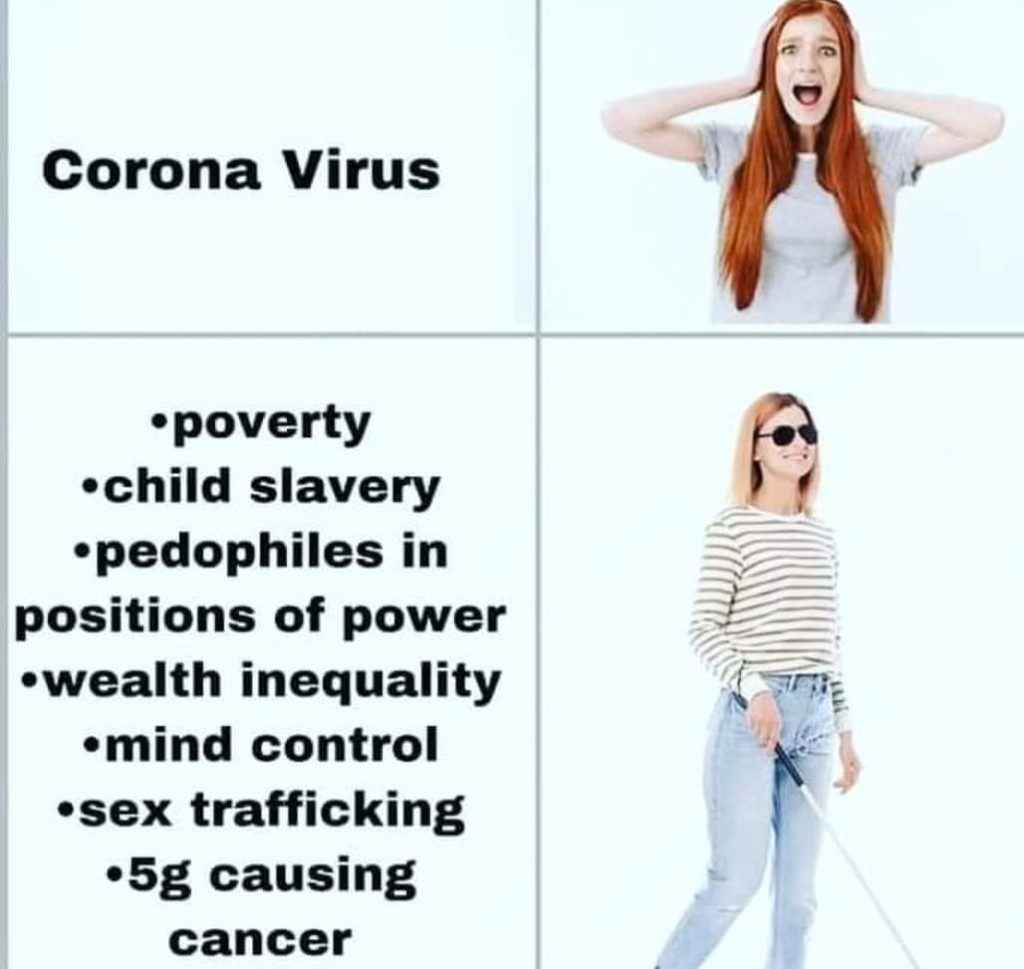

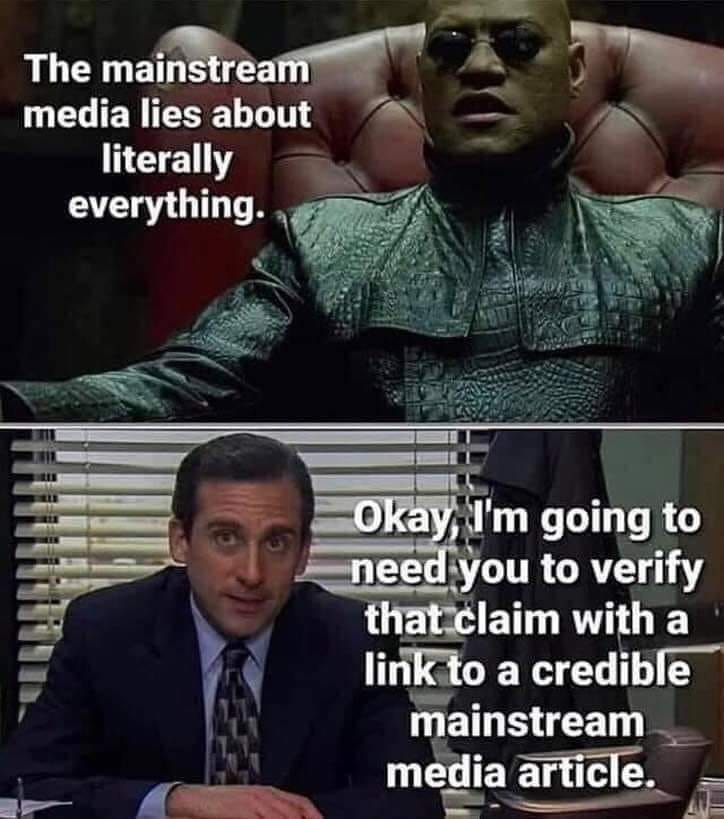

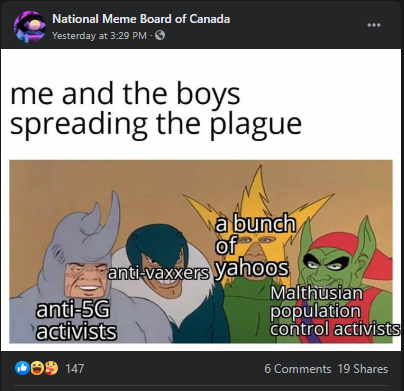

- A copy of the meme and related memes or party images

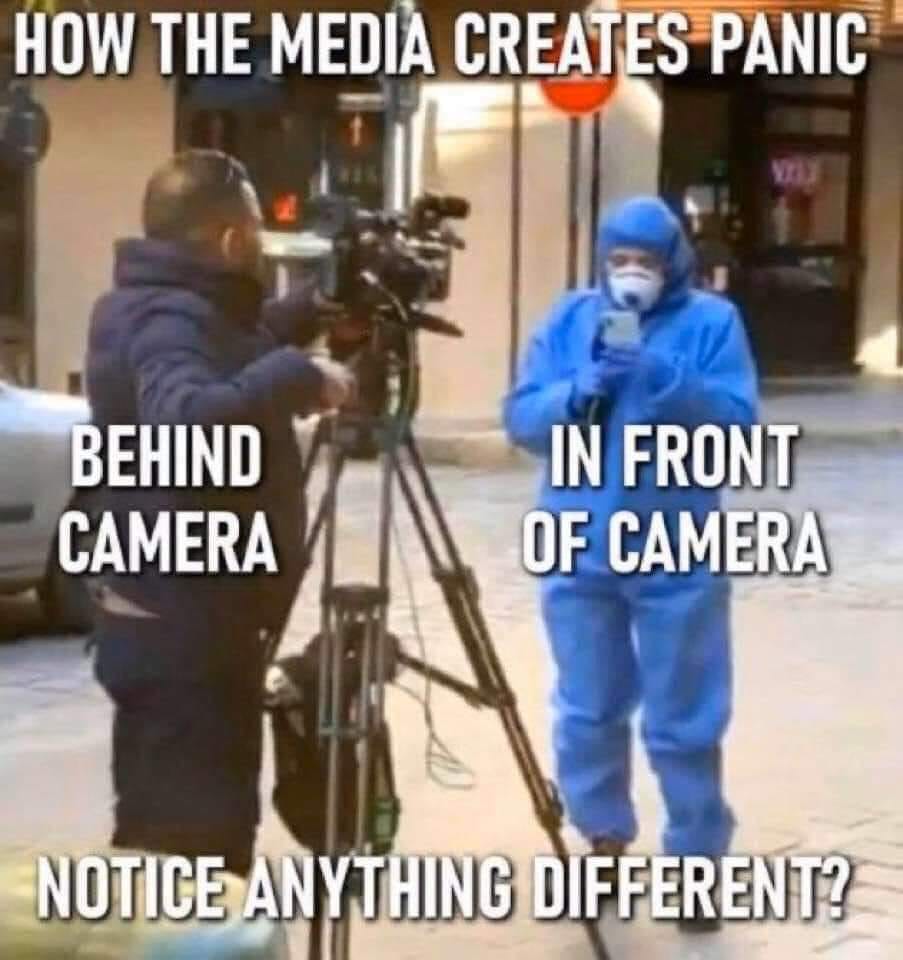

- A description of the image and its relation to a meme found on Know Your Meme or party brand

- The context / place you encountered it in or where you want to situate the meme

- An analysis of the visual argument of the meme:

- Analysis can focus on images as arguments, the meme’s agenda-setting function, policy dramatization, emotional appeal, image building for candidates, creating identification, connection to societal symbols, audience transportation, and ambiguity; or, in relation to brands, mediatization, mis/disinformation, or memetic theory.

Submissions should be 750–1,000 words long. When writing your submission, please use the existing entries on memes from the 43rd election as a reference.

Important: Before submitting your entry, please contact Fenwick McKelvey (email: fenwick-dot-mckelvey-at-concordia-dot-ca) with your preferred meme to ensure there is no duplication. Include “GCEM” in the subject line. Approved submissions can be emailed to the same address.